Global AI Server Spending Projected to Jump 45% in 2026 to $312 Billion as Infrastructure Race Accelerates

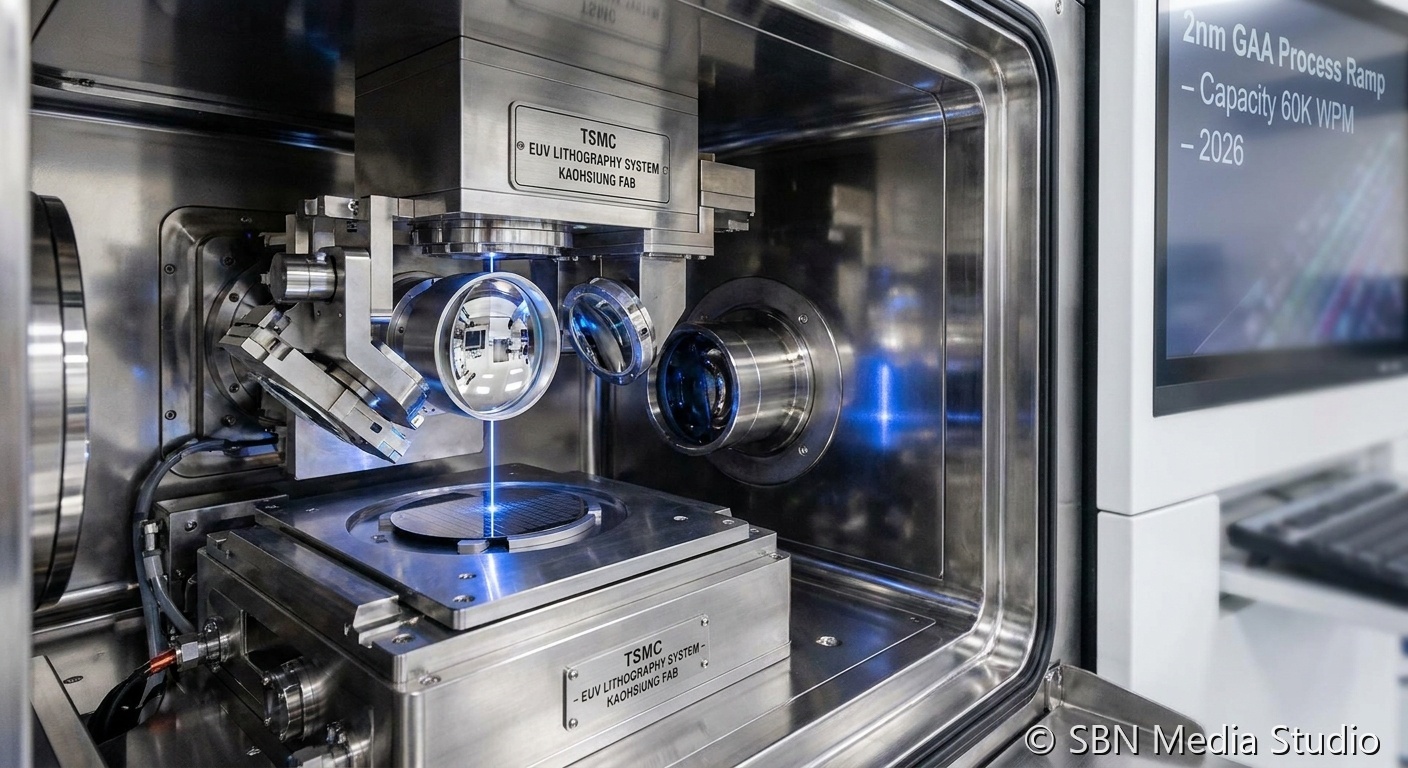

Capital expenditure on AI server infrastructure is projected to rise 45% year over year in 2026 to $312 billion, according to industry analysts. The boom is driven by hyperscale cloud providers racing to build the GPU clusters needed to train and serve ever-larger frontier models, and by a new wave of enterprise data center construction for on-premises AI deployment. NVIDIA continues to capture the majority of AI accelerator revenue, though AMD and custom silicon from Google, Amazon, and Microsoft are gaining share.

The scale of investment has sparked debate over whether the industry is building toward sustainable returns or inflating a speculative bubble. Supporters point to rapid revenue growth at OpenAI and Anthropic as evidence that demand is real, while skeptics note that most enterprises have yet to demonstrate measurable ROI from their generative AI deployments. The spending is also reshaping the semiconductor supply chain, with TSMC and Samsung running at full capacity on their most advanced process nodes.

A breakdown of the projected $312 billion shows how concentrated the AI infrastructure buildout is. Microsoft, Google, Amazon, and Meta alone account for approximately 65% of total AI server capital expenditure (roughly $200 billion), with each company spending between $45 billion and $80 billion annually on data center expansion. Microsoft's spending is driven by its partnership with OpenAI and the heavy compute requirements of Azure AI services, while Google is investing in custom TPU v6 chips and the infrastructure to support Gemini's global deployment. Amazon's AWS division is building new AI-optimized data centers in eight countries, and Meta has committed to what it calls the "largest single AI infrastructure investment in history" to support Llama model training and deployment across its family of applications. Beyond the hyperscalers, a new class of AI-focused data center operators has emerged, with companies such as CoreWeave and Lambda Labs raising billions of dollars to build GPU-dense facilities.

The sustainability and energy implications of the boom have become a major policy concern. According to the Department of Energy, AI data centers are projected to consume approximately 4.5% of total U.S. electricity generation by the end of 2026, up from less than 2% in 2023. Several states are reconsidering their renewable energy mandates and grid capacity planning as a result, and Virginia, Texas, and Georgia, the three largest data center markets, all face significant power constraints.

On the hardware side, NVIDIA's dominance remains firm: its H200 and B200 GPUs command more than 80% of the market for training workloads. Supply has not kept pace with demand, pushing lead times to four to six months and creating a thriving secondary market where GPUs trade at significant premiums. The infrastructure race shows no signs of slowing, as each new generation of frontier models requires substantially more compute to train and serve than its predecessor.

Sources

Gartner, IDC, Financial Times