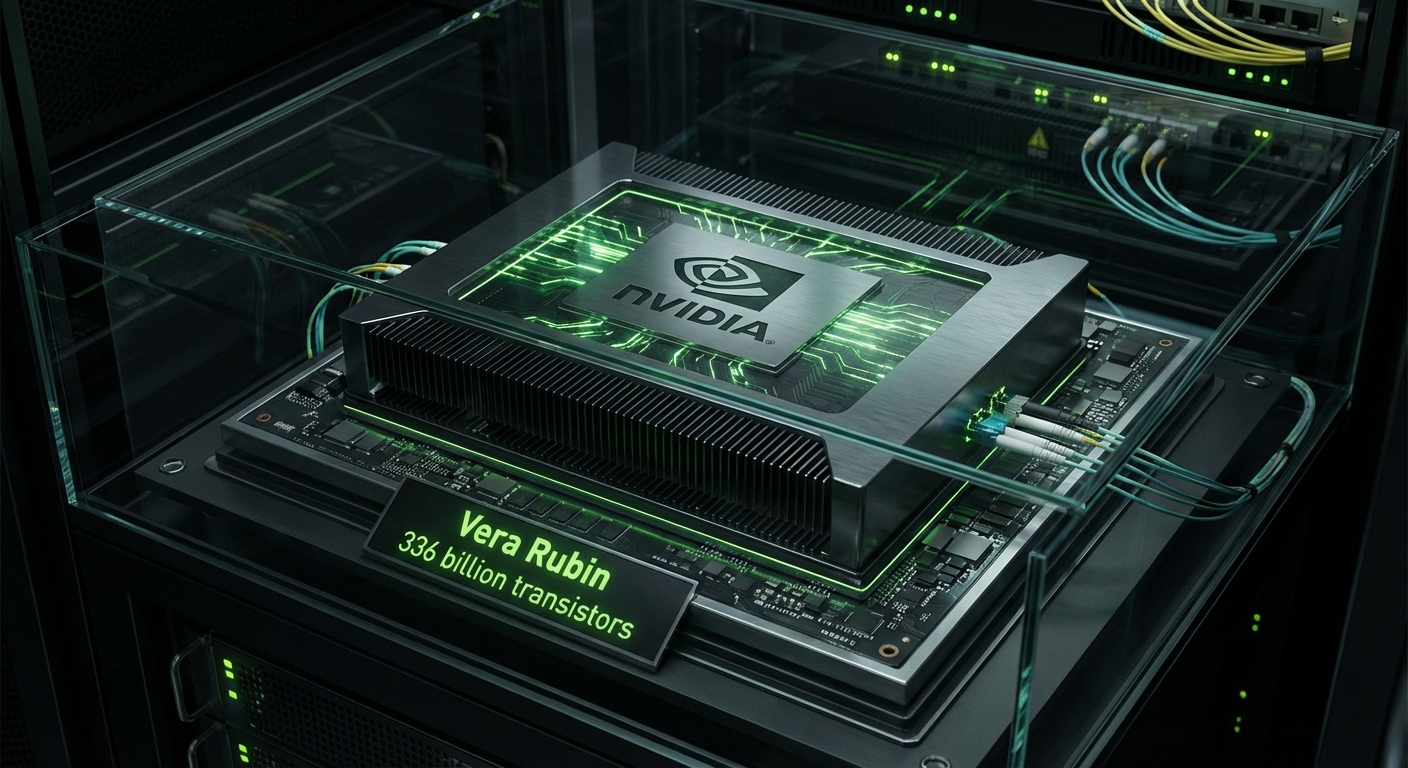

NVIDIA Unveils Vera Rubin at GTC 2026 — 336 Billion Transistors, $1 Trillion in Orders Through 2027

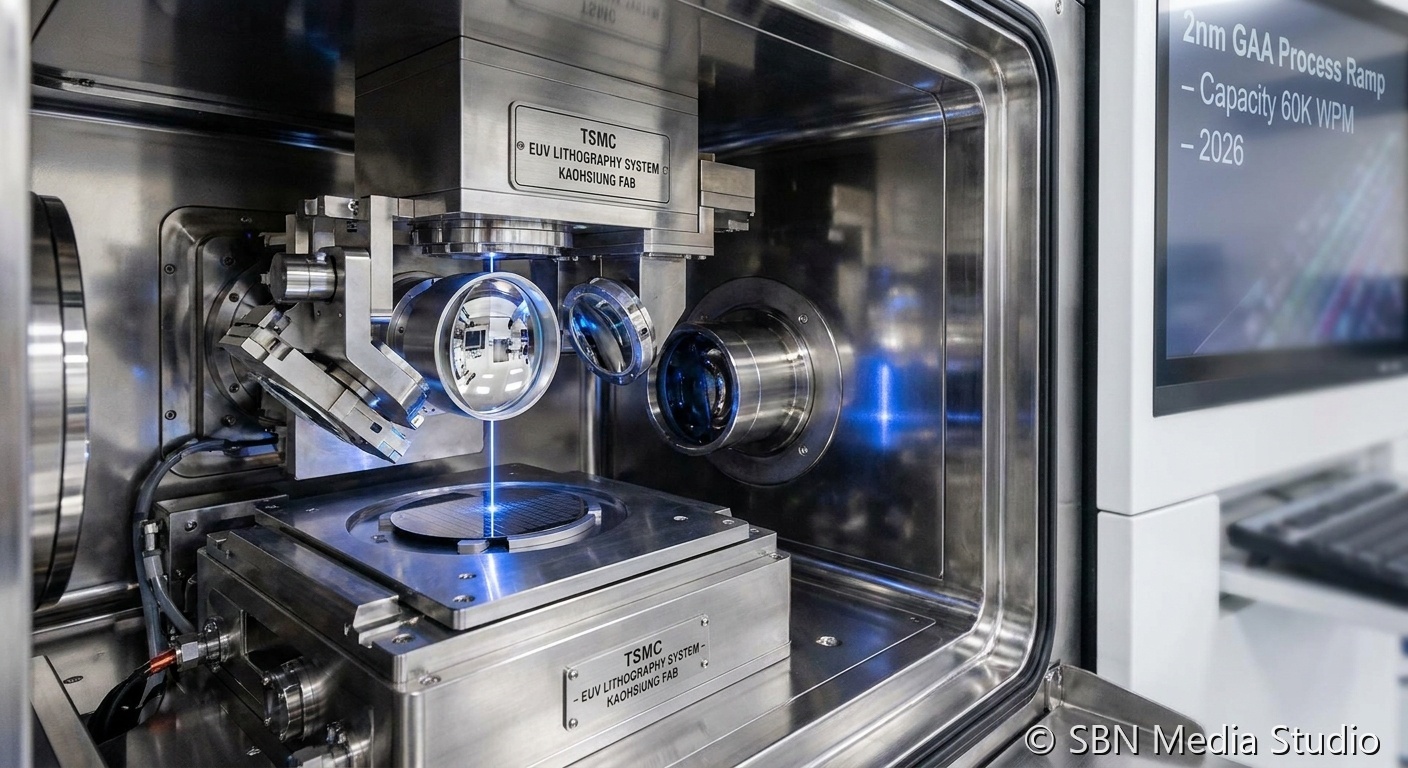

At GTC 2026, NVIDIA CEO Jensen Huang revealed the Vera Rubin platform, the successor to Blackwell, and projected $1 trillion in combined purchase orders for Blackwell and Vera Rubin through 2027, double the $500 billion projection from a year earlier. The Rubin GPU is built on TSMC's 3nm process with a dual-die design containing 336 billion transistors, delivering 50 PFLOPS of inference compute and 35 PFLOPS for training. Sixth-generation NVLink provides 3.6 TB/s of bandwidth per GPU, and the 72-GPU NVL72 rack delivers about 260 TB/s in aggregate, which NVIDIA says is more than the bandwidth of the entire internet. AWS, Google Cloud, Microsoft Azure, and CoreWeave are slated to be among the first to deploy Vera Rubin systems, in H2 2026.
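The rack-level figure can be checked against the per-GPU numbers quoted above. The following is a minimal sketch, assuming that "NVL72" means 72 GPUs per rack and that totals scale linearly with GPU count. The function and constant names are illustrative, and the article does not state the numeric precision behind the PFLOPS figures, so the compute totals are only comparable with each other.

```python
# Per-GPU figures as quoted in the article.
NVLINK_TBPS_PER_GPU = 3.6
INFERENCE_PFLOPS_PER_GPU = 50
TRAINING_PFLOPS_PER_GPU = 35

# Assumption: "NVL72" means 72 GPUs per rack, and totals scale linearly.
GPUS_PER_RACK = 72


def rack_totals(gpus: int = GPUS_PER_RACK) -> dict[str, float]:
    """Multiply the per-GPU figures by the GPU count (1 exaFLOPS = 1000 PFLOPS)."""
    return {
        "nvlink_tbps": gpus * NVLINK_TBPS_PER_GPU,
        "inference_exaflops": gpus * INFERENCE_PFLOPS_PER_GPU / 1000,
        "training_exaflops": gpus * TRAINING_PFLOPS_PER_GPU / 1000,
    }


totals = rack_totals()
print(f"NVLink:    {totals['nvlink_tbps']:.1f} TB/s")             # 259.2 TB/s
print(f"Inference: {totals['inference_exaflops']:.2f} exaFLOPS")  # 3.60 exaFLOPS
print(f"Training:  {totals['training_exaflops']:.2f} exaFLOPS")   # 2.52 exaFLOPS
```

The 259.2 TB/s result matches the quoted 260 TB/s after rounding.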

OpenAI, Anthropic, Meta, and xAI have all committed to the Rubin platform, with OpenAI alone reportedly ordering over 100,000 Rubin GPUs for its next-generation training infrastructure. The Rubin platform moves to HBM4 memory, sourced from both SK Hynix and Micron, with up to 288 GB of HBM per GPU, 1.5x the 192 GB of the Blackwell B200. Across a 72-GPU NVL72 rack that comes to roughly 20.7 TB of HBM, enough to hold the weights of a trillion-parameter model within a single NVLink domain, although one GPU alone cannot hold such a model even at 4-bit precision (about 500 GB of weights). Huang also unveiled Kyber, a 144-GPU rack architecture for Rubin Ultra, due in 2027, that is projected to push rack-level compute to 1.4 exaFLOPS (a figure quoted without a numeric precision), rivaling today's largest supercomputers in a single rack.
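As a rough check on the memory claim, the weight footprint of a model is its parameter count times the bytes stored per parameter. The sketch below is an estimate under stated assumptions: it counts weights only (no KV cache, activations, or optimizer state), uses decimal gigabytes, and assumes 72 GPUs per rack. The helper names and the precision table are illustrative and do not come from NVIDIA.

```python
import math

HBM_GB_PER_GPU = 288   # per-GPU capacity quoted in the article
GPUS_PER_RACK = 72     # assumption: NVL72 means 72 GPUs per rack
RACK_HBM_GB = HBM_GB_PER_GPU * GPUS_PER_RACK  # 20,736 GB, about 20.7 TB

# Bytes stored per parameter at common precisions (weights only).
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weights_gb(params: float, bytes_per_param: float) -> float:
    """Weight footprint in decimal GB."""
    return params * bytes_per_param / 1e9


def min_gpus_for_weights(params: float, bytes_per_param: float,
                         hbm_gb: float = HBM_GB_PER_GPU) -> int:
    """Smallest GPU count whose combined HBM can hold the weights."""
    return math.ceil(weights_gb(params, bytes_per_param) / hbm_gb)


ONE_TRILLION = 1e12
for name, nbytes in BYTES_PER_PARAM.items():
    size = weights_gb(ONE_TRILLION, nbytes)
    gpus = min_gpus_for_weights(ONE_TRILLION, nbytes)
    print(f"{name}: {size:,.0f} GB of weights, at least {gpus} GPUs")
# FP16: 2,000 GB of weights, at least 7 GPUs
# FP8: 1,000 GB of weights, at least 4 GPUs
# FP4: 500 GB of weights, at least 2 GPUs
```

Under these assumptions a trillion-parameter model needs at least two 288 GB GPUs even at 4-bit precision, while a full rack has room to spare.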

The financial implications are substantial. NVIDIA's market capitalization briefly surpassed $5 trillion following the GTC keynote, making it the most valuable company in the world. Wall Street analysts have raised price targets, with Bank of America setting a $280 target and citing "unassailable competitive moats in AI silicon." The Vera Rubin platform's 4x energy-efficiency improvement over Blackwell is also strategically significant given the Iran conflict's impact on data center energy costs: taken as performance per watt, it lets hyperscalers deliver the same compute throughput with roughly a quarter of the power. NVIDIA's dominance of the AI accelerator market appears more entrenched than ever, though AMD's MI400 and custom silicon from Google, Amazon, and Microsoft continue to nibble at the edges.

Sources

CNBC, NVIDIA Newsroom, Tom's Hardware, ServeTheHome