Data Centers in Space: The Physics, the Stocks, and the Companies Racing to Put AI in Orbit

SpaceX filed with the FCC on January 30, 2026, to launch up to one million satellites for a megaconstellation of orbital data centers: infrastructure designed to run advanced AI models beyond Earth's atmosphere. The filing, accepted for public comment just five days after it was submitted, describes a system that would 'operate a constellation of satellites with unprecedented computing capacity to power advanced artificial intelligence models.' Each satellite, internally called 'AI Sat Mini,' would stretch over 170 meters in length, carry massive solar arrays, and deliver 100 kilowatts of power to onboard AI processors running custom 'D3' chips designed for radiation resistance and high-temperature operation.

The concept isn't Musk's alone. OpenAI CEO Sam Altman has mused that space may be the long-term solution for AI compute. Jeff Bezos predicted 'giant gigawatt data centers in space' within 20 years. Former Google CEO Eric Schmidt acquired rocket manufacturer Relativity Space in a move linked to orbital computing. And NVIDIA CEO Jensen Huang declared at GTC 2026: 'Space computing, the final frontier, has arrived.' The orbital data center market, currently valued at approximately $1.77 billion, is projected to reach $39.09 billion by 2035 at a staggering 67.4% compound annual growth rate. But between today's ambitions and tomorrow's orbital compute farms lies an enormous gap — one defined by the unforgiving laws of physics.

THE PHYSICS CHALLENGE: WHY COOLING IN SPACE IS NEARLY IMPOSSIBLE

Here's the counterintuitive truth that most people miss: space is not cold in any useful sense for electronics. Deep space has a background temperature near absolute zero, but a vacuum is a near-perfect insulator. There is no air, no water, no medium to carry heat away. On Earth, data centers use massive air conditioning systems, water cooling loops, and even river water to dissipate heat. In orbit, the only mechanism available is thermal radiation (literally glowing heat into the void), governed by the Stefan-Boltzmann law, under which radiated power scales with surface area and with the fourth power of absolute temperature. A data center trying to dissipate just 1 megawatt of waste heat into deep space needs a radiating surface of approximately 1,200 square meters (roughly 35 x 35 meters) to maintain chips within their operating temperature range, a figure consistent with a double-sided panel running near room temperature. For context, the International Space Station's entire thermal control system, which uses a complex ammonia cooling loop and enormous radiator panels, can only dissipate 16 kilowatts. That's equivalent to about 16 NVIDIA H200 GPUs, barely a quarter of a single ground-based server rack. Scaling to megawatt-class AI workloads would require radiator arrays orders of magnitude larger than anything ever deployed in orbit.
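The 1,200 square meter figure can be roughly reproduced from the Stefan-Boltzmann law. The sketch below is a back-of-the-envelope check, not a thermal design: the 300 K panel temperature, 0.9 emissivity, and two radiating faces are illustrative assumptions that do not come from any cited source, and the function name is hypothetical. Absorbed sunlight and Earth's infrared glow are ignored, so real radiators would need to be larger.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4)
SIGMA = 5.670374419e-8

def radiator_area_m2(heat_w, temp_k=300.0, emissivity=0.9, sides=2):
    """Flat-panel area needed to radiate heat_w watts to deep space.

    Assumed values (not from the article): 300 K panel, emissivity 0.9,
    both faces radiating. Ignores sunlight and Earth infrared loading.
    """
    flux_per_face = emissivity * SIGMA * temp_k ** 4  # W per m^2 per face
    return heat_w / (flux_per_face * sides)

area = radiator_area_m2(1_000_000)  # 1 MW of waste heat
print(f"{area:.0f} m^2, about {area ** 0.5:.0f} m on a side")
```

Because radiated power rises with the fourth power of temperature, running the panel hotter shrinks it quickly, which is one reason chips rated for high-temperature operation matter in this design space.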

THE ELECTRONICS CHALLENGE: RADIATION DESTROYS CHIPS

Earth's atmosphere shields terrestrial data centers from cosmic radiation and solar particle events. Orbital data centers have no such protection. High-energy particles constantly bombard electronics in low Earth orbit, causing two categories of damage. Single-event effects (SEE) include 'bit flips' — where a logical zero spontaneously becomes a one — corrupting data and crashing computations. Total ionizing dose (TID) gradually degrades semiconductor structures over months and years, eventually destroying chips entirely. Radiation-hardened processors exist, but they lag multiple generations behind commercial chips in performance, making them unsuitable for AI workloads. The alternative is triple modular redundancy (TMR) — running three identical systems in parallel and voting on correct outputs. But TMR means launching three copies of every chip, three times the cooling, three times the power, and three times the launch cost. Some forms of shielding actually make things worse: high-energy particles hitting a metal shield can create a shower of secondary particles that cause multiple simultaneous bit flips — far harder to correct than isolated errors.
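The voting step in triple modular redundancy can be illustrated with a bitwise majority function. This is a minimal software sketch with hypothetical names; real TMR is usually implemented in hardware, and nothing here describes any particular vendor's design.

```python
def tmr_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority vote: each output bit takes the value held by at least two inputs."""
    return (a & b) | (a & c) | (b & c)

good = 0b1011_0010
upset = good ^ (1 << 5)  # one copy suffers a single bit flip at bit 5

# One corrupted copy is outvoted by the two clean ones.
print(tmr_vote(good, upset, good) == good)   # True
# The same bit flipped in two copies wins the vote, so the error passes through.
print(tmr_vote(upset, upset, good) == good)  # False
```

The second case shows why showers of secondary particles are so troublesome: voting only corrects errors that stay isolated to one copy.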

THE VACUUM PROBLEM: NO FANS, NO CONVECTION, NO SHORTCUTS

Without air, there is no convection cooling inside a spacecraft. On Earth, we strap fans to motherboards and blow air across heat sinks. In vacuum, a fan is just a motor wasting watts. Heat must travel through conduction — physical contact between components and cooling plates — to reach external radiators. Every thermal joint, every interface, every cable routing decision becomes a critical engineering challenge. Satellites also experience extreme thermal cycling: in low Earth orbit, a satellite passes through Earth's shadow every 90 minutes, swinging from direct solar heating (+150°C on sun-facing surfaces) to deep cold (-150°C in shadow). These repeated thermal shocks stress solder joints, crack circuit boards, and fatigue mechanical connections. Components must be designed to survive tens of thousands of these cycles over a satellite's operational life.
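The "tens of thousands" of thermal cycles follows directly from the 90-minute orbit. The short calculation below assumes one shadow pass per orbit and uses hypothetical mission lifetimes of 1, 5, and 10 years, which are illustrative only.

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60

def thermal_cycles(years, orbit_minutes=90.0):
    """Number of sun-to-shadow cycles, assuming one eclipse per orbit."""
    return int(years * MINUTES_PER_YEAR / orbit_minutes)

for years in (1, 5, 10):  # assumed lifetimes, not from the article
    print(years, "years:", thermal_cycles(years), "cycles")
```

Under these assumptions a five-year mission sees close to 30,000 cycles, about 16 per day.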

THE POWER EQUATION: SOLAR IS ABUNDANT BUT NOT FREE

Space offers virtually unlimited solar energy — no clouds, no night (in certain orbits), no atmospheric absorption. SpaceX's plan places satellites in sun-synchronous orbits where they receive sunlight more than 99% of the time. But converting that solar energy to usable computing power involves significant losses. Current space-grade solar cells achieve roughly 30-32% efficiency. Power conditioning, voltage regulation, and distribution add further losses. And crucially, every watt that powers a chip eventually becomes waste heat that must be radiated away — bringing us back to the cooling problem. SpaceX claims their satellites can deliver 100 kW of power per metric ton allocated to computing. For a constellation producing meaningful AI compute, the total power generation and thermal management systems would be staggeringly large.
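To put the efficiency figures in perspective, the sketch below estimates the solar array area needed to deliver 100 kW to the electronics. The solar constant of about 1,361 W per square meter is a standard value; the 90% power-conditioning efficiency is an assumed placeholder, since the text gives no number for those losses, and the function name is hypothetical.

```python
SOLAR_CONSTANT = 1361.0  # W/m^2 of sunlight above the atmosphere

def array_area_m2(load_w, cell_eff=0.30, conditioning_eff=0.90):
    """Array area needed to deliver load_w watts to the electronics.

    cell_eff follows the 30-32% range quoted in the text;
    conditioning_eff is an assumed value for regulation and distribution losses.
    """
    return load_w / (SOLAR_CONSTANT * cell_eff * conditioning_eff)

for eff in (0.30, 0.32):
    print(f"cell efficiency {eff:.0%}: {array_area_m2(100_000, cell_eff=eff):.0f} m^2")
```

Under these assumptions a single 100 kW satellite needs on the order of 250 to 275 square meters of cells, and the full 100 kW still has to leave the spacecraft as radiated heat.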

THE LATENCY AND BANDWIDTH BOTTLENECK

AI training workloads require massive data transfers between GPUs: modern training clusters use 400 Gbps to 3.2 Tbps InfiniBand connections with sub-microsecond latency. Orbital satellites communicate via laser optical links. Current Starlink satellites support up to 200 Gbps per laser, with next-generation hardware targeting 1 Tbps. But the speed of light imposes a hard floor: even at 500 km altitude, the round trip to the ground takes a minimum of about 3.3 milliseconds, more than three orders of magnitude longer than the sub-microsecond latency in terrestrial data centers. This makes space-based compute potentially viable for AI inference (running trained models) but extremely challenging for training (which requires constant high-bandwidth synchronization between thousands of GPUs). SpaceX envisions the constellation handling inference workloads for autonomous vehicles, robotics, and distributed AI applications rather than replacing terrestrial training clusters.
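The latency floor is simple light-travel arithmetic. The sketch below assumes the satellite is directly overhead and ignores processing, queuing, and slant-range effects, so real figures would be higher; the 1 microsecond comparison value is an illustrative stand-in for "sub-microsecond".

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum

def round_trip_ms(altitude_km):
    """Minimum ground-to-satellite-to-ground light travel time in milliseconds."""
    return 2 * altitude_km / C_KM_PER_S * 1000

rtt = round_trip_ms(500)
print(f"round trip: {rtt:.2f} ms")
print(f"vs. a 1 microsecond fabric hop: about {rtt * 1000:.0f}x slower")
```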

STOCKS AND MARKETS DIRECTLY IMPACTED

The orbital data center narrative is already moving markets. Here are the key companies and tickers investors are watching:

Launch Providers:

• SpaceX (Pre-IPO, expected Q2 2026) — The linchpin of the entire ecosystem. Valued at $1.25 trillion after the xAI merger. An IPO could raise $25B+ and would be the most significant space stock listing in history.

• Rocket Lab (NASDAQ: RKLB) — The most elastic pure-play launch stock. Vertically integrated (rockets, satellite buses, components). Order backlog exceeds $1B. The 2026 maiden flight of the Neutron rocket is a key catalyst.

• Blue Origin / New Glenn — Jeff Bezos's company, whose New Glenn rocket first reached orbit in January 2025. Not yet public but a major future competitor.

Satellite & Space Infrastructure:

• Redwire (NYSE: RDW) — Builds satellite components, avionics, deployable structures, and power systems. Revenue from NASA, U.S. military, and commercial partners.

• BlackSky (NYSE: BKSY) — Most direct beneficiary of in-orbit AI computing. Already uses onboard AI for real-time satellite imagery analysis.

• Planet Labs (NYSE: PL) — Announced 'Project Suncatcher' with Google to explore AI computing in orbit.

• Mynaric (NASDAQ: MYNA) — Makes laser communication terminals critical for inter-satellite data links.

Chip & Hardware Enablers:

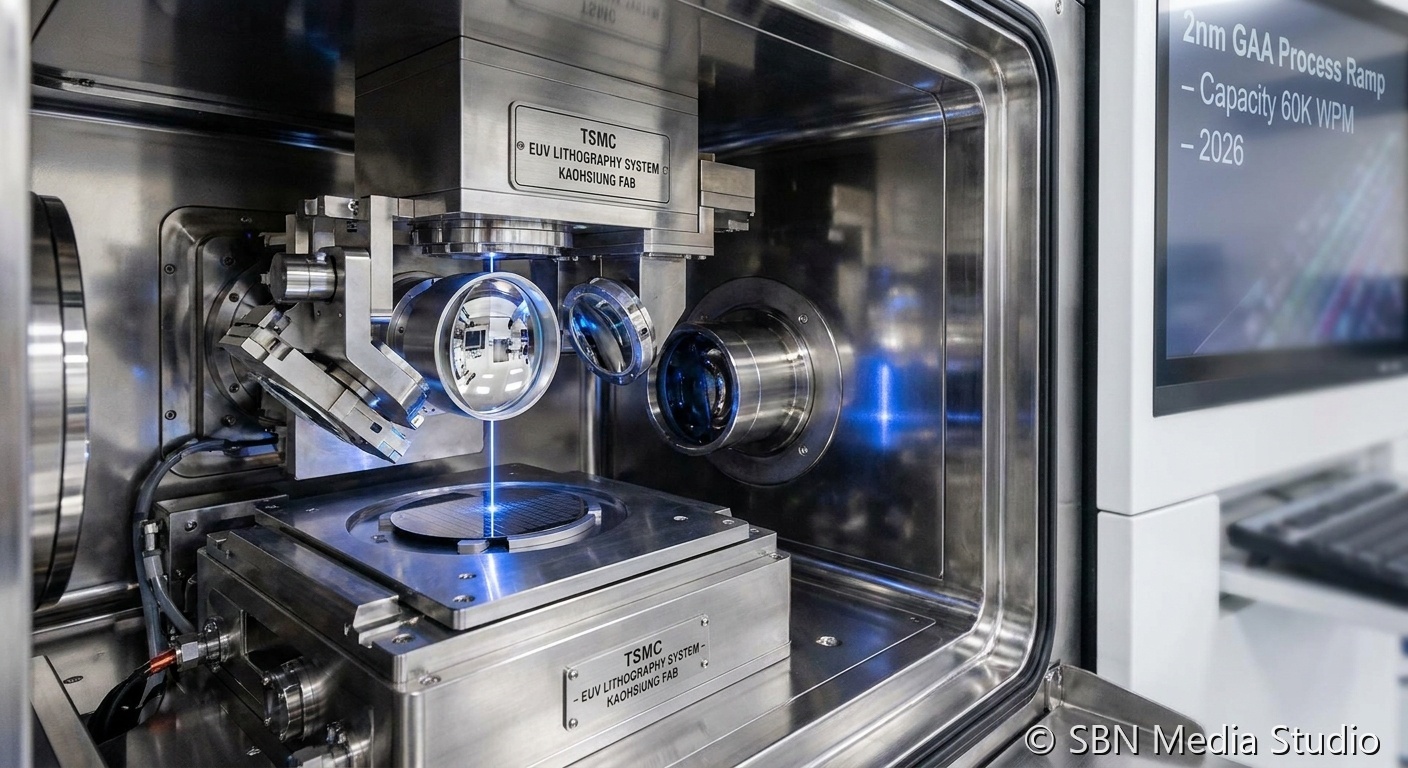

• NVIDIA (NASDAQ: NVDA) — Already flew an H100 GPU in orbit on the Starcloud-1 satellite. Jensen Huang publicly endorsed space computing at GTC 2026.

• Microchip Technology (NASDAQ: MCHP) — Supplies radiation-hardened processors and FPGAs for space applications. Partner on Axiom's orbital data center node.

• Marvell (NASDAQ: MRVL) — Provides custom silicon and optical networking chips that could power inter-satellite communication.

Cooling & Thermal Management:

• II-VI / Coherent (NYSE: COHR) — Advanced materials for thermal management and laser communications.

• Boyd Corporation (Private) — Thermal management solutions being adapted for space applications.

Space Computing Startups (Private):

• Ramon.Space — Supplies radiation-hardened processors to ~50 space missions including OneWeb and ESA/NASA. Planning prototype orbital data center launch in 2026-2027 with Ingrasys.

• Axiom Space — Building the world's first commercial space station with integrated orbital data center nodes. Delivered AxDCU-1 to the ISS via SpaceX in August 2025.

• Aetherflux — Founded by Robinhood co-founder Baiju Bhatt. Raised $50M Series A from Andreessen Horowitz. Combines orbital compute with power-beaming technology.

• Sophia Space — Developing orbital computing platforms for defense and commercial applications.

THE DEFENSE ANGLE: WHY GOVERNMENTS ARE PAYING ATTENTION

The U.S. government is a major catalyst. President Trump signed the executive order 'Ensuring American Space Superiority' on December 18, 2025, which contains the first directives for a 'Space Silicon Valley.' The Pentagon views orbital computing as critical for real-time battlefield intelligence: satellites that can process imagery and sensor data onboard, delivering actionable intelligence in seconds rather than transmitting raw data to ground stations for processing. Lockheed Martin generates roughly $13 billion in annual space revenue, and defense primes like L3Harris and Northrop Grumman offer exposure to government-backed space computing demand with more predictable cash flows than pure-play space startups.

THE REALISTIC TIMELINE

Despite the hype, the physics constraints are real. Here's what experts actually project:

• 2026: Starcloud-1 demonstrated an NVIDIA H100 running in orbit. Axiom's AxDCU-1 operates on the ISS. Ramon.Space flies early prototype samples. Proof-of-concept phase.

• 2027: Industry moves toward active thermal control with space-rated heat pumps. First dedicated orbital data center nodes from Axiom and Ramon.Space. Multiple new reusable rockets (Neutron, New Glenn, Terran R) operational.

• 2028-2030: Early commercial services for specific workloads (defense intelligence, Earth observation AI, edge inference). Still small-scale — single-digit percentage of global AI compute.

• 2030-2035: Deutsche Bank estimates orbital data centers 'reach close to parity' with terrestrial costs. Google's feasibility study suggests cost-effectiveness if Starship launch costs reach $200/kg with 180 launches/year. Scaling begins in earnest.

• 2035+: Potential for meaningful orbital compute capacity and full-scale constellations. But even the most optimistic projections show space handling only a single-digit percentage of total global AI workloads before 2035.

Musk predicted orbital data centers will be more cost-effective than Earth-bound ones 'within two to three years.' Most independent analysts place that crossover well into the 2030s.

THE BOTTOM LINE

Space-based data centers represent one of the most ambitious convergences of AI, aerospace, and semiconductor technology ever attempted. The physics challenges — cooling without convection, radiation destroying chips, extreme thermal cycling, latency limitations — are each individually formidable. Together, they define an engineering frontier that will take a decade or more to conquer at scale. But the enabling technologies are advancing rapidly: reusable rockets are slashing launch costs, laser inter-satellite links are approaching terabit speeds, and the first AI chips have already computed in orbit. The question isn't whether data centers will operate in space — early prototypes already do. The question is whether orbital compute can scale fast enough and cheaply enough to matter before terrestrial alternatives solve their own energy and cooling constraints. For investors and industry watchers, the space data center race isn't about picking the winner today. It's about understanding that an entirely new computing paradigm is being born — one that will create winners across launch, chips, thermal management, laser communications, and space-hardened electronics. The companies building the picks and shovels for this gold rush may prove to be the smartest bets of all.

Sources

SpaceNews, Scientific American, DatacenterDynamics, EE Times, CNBC, Goldman Sachs, InvestorPlace, Axiom Space, Ramon.Space